NVIDIA DGX Spark — is this the ultimate local AI workstation?

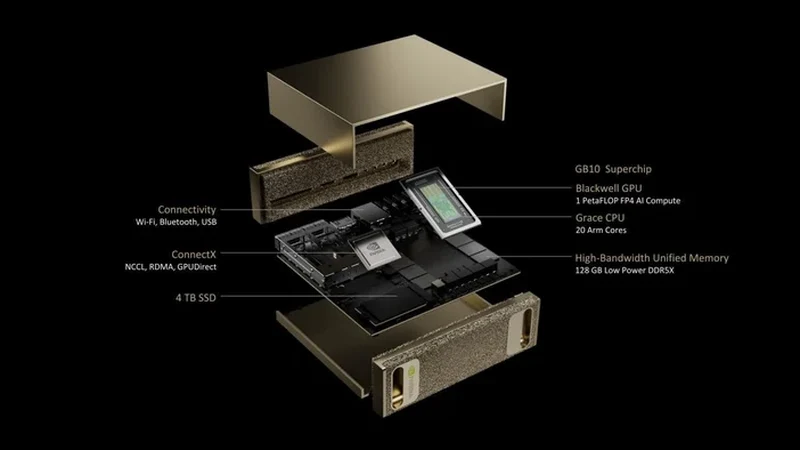

128 GB of unified memory. A Grace Blackwell chip rated at 1 petaflop of FP4 AI compute. A desktop form factor you can actually put on your desk. NVIDIA built a machine that can hold models of up to roughly 200B parameters (at Q4) entirely in local memory.

But who is it actually for? And what should you do right now if you need local AI hardware today?

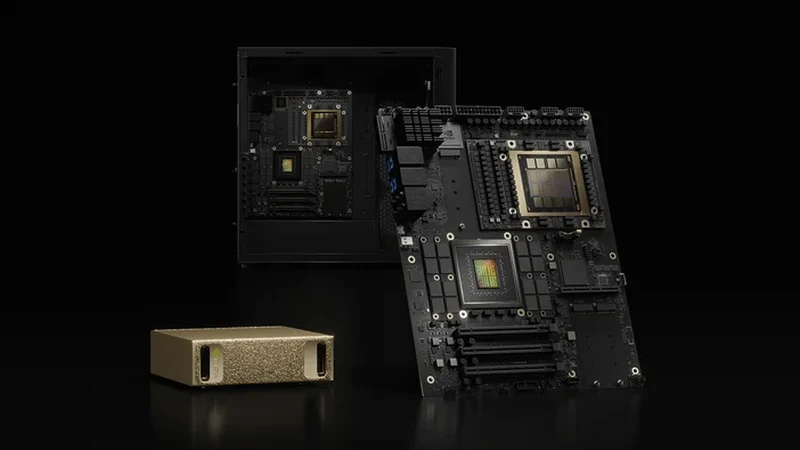

Image: NVIDIA Corporation

Disclosure: this article contains affiliate links to alternative hardware you can buy today. We may earn a commission from qualifying purchases at no cost to you.

What is the NVIDIA DGX Spark?

The DGX Spark is NVIDIA's first desktop-class AI workstation built on the Grace Blackwell architecture. Unlike consumer GPUs that plug into a PCIe slot, the Spark is an integrated system: the CPU and GPU sit together on a single GB10 superchip and share a unified 128 GB memory pool.

Think of it as what Apple did with the M-series chips, but for AI workloads. The GPU does not have a separate VRAM pool. Instead, the entire 128 GB is accessible by both the Arm-based Grace CPU and the Blackwell GPU cores, so a large model does not have to be split between system RAM and VRAM.

Why this matters: a standard RTX 5090 has 32 GB of VRAM. To run a 70B model at Q4, you need ~40 GB — which means CPU offloading, slower inference, and compromises. The DGX Spark has 128 GB. It can run models 4x larger without any offloading at all.
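The ~40 GB figure can be reproduced with back-of-the-envelope arithmetic. The sketch below assumes Q4 weights cost about 4.5 bits per parameter (4-bit values plus quantization scales) and ignores KV cache and runtime overhead. That bit width and the function name `estimate_weights_gb` are illustrative assumptions, not measurements.

```python
def estimate_weights_gb(params_billion: float, bits_per_param: float = 4.5) -> float:
    """Rough size of quantized weights in GB (1 GB = 1e9 bytes).

    bits_per_param=4.5 is an assumed average for Q4-style formats;
    real quantization schemes vary.
    """
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    for size in (8, 70, 200):
        print(f"{size}B -> ~{estimate_weights_gb(size):.1f} GB")
```

Under these assumptions a 70B model comes out just under 40 GB, already past the 32 GB of an RTX 5090, while a 200B model lands around 112 GB, inside the Spark's 128 GB pool.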

DGX Spark key specifications

| Spec | DGX Spark | RTX 5090 (reference) |
|---|---|---|
| GPU architecture | Blackwell (GB10) | Blackwell (GB202) |
| CPU | Grace (Arm, 20 cores) | Separate (Intel/AMD) |
| Memory | 128 GB unified (LPDDR5x) | 32 GB GDDR7 (VRAM only) |
| AI compute | 1 PFLOP (FP4) | 3.35 PFLOP (FP4) |
| Max model (Q4) | ~200B params | ~50B params |
| Form factor | Desktop appliance | PCIe card in PC |
| OS | DGX OS (Ubuntu-based) | Windows/Linux |
| Estimated price | $3,000–$5,000 (est.) | ~$2,000 |

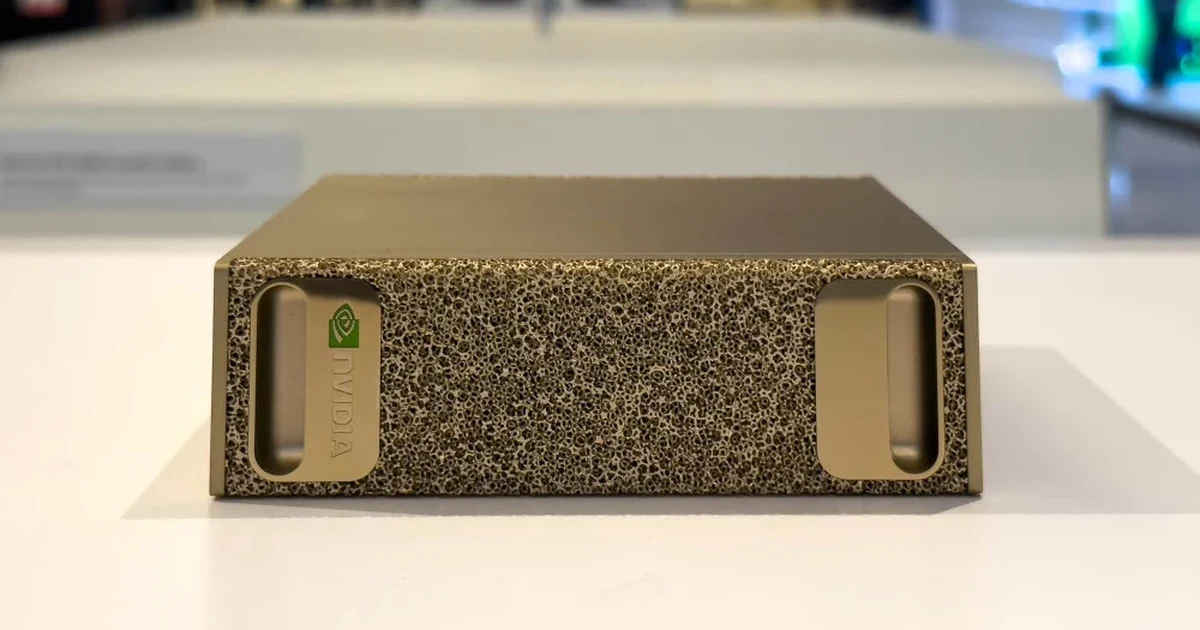

Image: NVIDIA Corporation

What can the DGX Spark actually run?

With 128 GB of unified memory, the DGX Spark opens a tier of models that consumer GPUs simply cannot touch without offloading. Here is what fits entirely in memory:

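| Model | Memory needed | DGX Spark | RTX 5090 |
|---|---|---|---|
| Llama 3.1 8B | 5 GB (Q4) | Runs natively | Runs natively |
| Gemma 4 27B | 14.9 GB (Q4) | Runs natively | Runs natively |
| Llama 3.1 70B | 40 GB (Q4) | Runs natively | Needs offload |
| Qwen2.5 72B | 42 GB (Q4) | Runs natively | Needs offload |
| Llama 4 Maverick 400B | ~110 GB (roughly Q2; Q4 needs about 200 GB or more) | Tight fit (Q2 only) | Cannot run |
| DeepSeek V3 671B | ~185 GB (Q2) | Exceeds 128 GB | Cannot run |

Key insight: the DGX Spark's advantage is not raw speed (the spec table above shows the RTX 5090 ahead on FP4 compute). It is capacity. 70B-class models run without offloading, and models of around 200B parameters fit at Q4. The very largest open models remain a stretch: Llama 4 Maverick 400B fits only with aggressive quantization at roughly Q2, and DeepSeek V3 at ~185 GB exceeds the 128 GB pool of a single unit. Neither is possible at all on a consumer card. That capacity is the Spark's real value proposition.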
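The "Runs natively" and "Needs offload" labels come down to comparing a model's footprint with the device's memory pool. The sketch below uses the Q4 footprints of the first four rows of the table above. The 4 GB headroom for KV cache and runtime buffers, and the names `classify` and `fit_table`, are assumptions made for illustration.

```python
# Q4 footprints in GB, taken from the first four rows of the table above.
MODELS_Q4_GB = {
    "Llama 3.1 8B": 5.0,
    "Gemma 4 27B": 14.9,
    "Llama 3.1 70B": 40.0,
    "Qwen2.5 72B": 42.0,
}

# Memory pools in GB, from the specification table.
DEVICES_GB = {"DGX Spark": 128.0, "RTX 5090": 32.0}


def classify(footprint_gb: float, device_gb: float, headroom_gb: float = 4.0) -> str:
    """Label a model by whether weights plus assumed headroom fit in memory."""
    if footprint_gb + headroom_gb <= device_gb:
        return "Runs natively"
    return "Needs offload"


def fit_table() -> dict[str, dict[str, str]]:
    """Build {model: {device: label}} for every model and device pair."""
    return {
        model: {dev: classify(gb, cap) for dev, cap in DEVICES_GB.items()}
        for model, gb in MODELS_Q4_GB.items()
    }


if __name__ == "__main__":
    for model, row in fit_table().items():
        print(f"{model:15s} {row}")
```

Raising `headroom_gb` models longer context windows, which is where a 32 GB card runs out of room first.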

DGX Spark vs building your own AI PC

If you need local AI hardware today, the DGX Spark is not available yet. Here is how the two paths compare so you can make an informed decision.

DGX Spark (when available)

- 128 GB unified — run 200B models natively

- Integrated appliance, no assembly

- DGX OS optimized for AI workflows

- NVIDIA support + NIM containers

- Estimated $3,000–$5,000

- Not upgradeable (sealed unit)

Custom AI PC (available now)

- Up to 32 GB VRAM (RTX 5090) — 50B max

- Fully customizable + upgradeable

- Runs any OS: Windows, Linux, dual-boot

- Gaming, development, and AI in one machine

- $1,500–$3,000 for a strong build

- Larger form factor, higher power draw

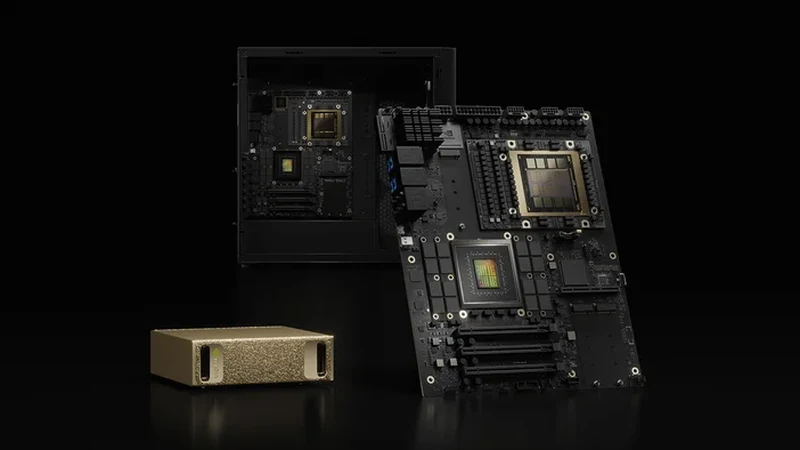

Image: NVIDIA Corporation

Who should consider the DGX Spark?

Ideal for

- AI researchers who need to iterate on 70B–200B models locally

- Enterprise teams building private AI agents with large context windows

- Healthcare, legal, or finance professionals who cannot send data to the cloud

- Developers prototyping multi-modal applications with frontier models

Probably not for

- Hobbyists running 7B–13B chat models (an RTX 3060 is enough)

- Gamers who also want to experiment with AI on the side

- Users on a tight budget — consumer GPUs deliver great value under $500

What to buy right now while you wait

The DGX Spark is not shipping yet. If you need local AI hardware today, these are the best options depending on your target model size. You can always resell them later when the Spark becomes available.

For models up to 13B (chat, coding, image gen)

The RTX 3060 12GB remains the best entry point. 12 GB of VRAM runs Gemma 4 12B, Phi-4, and Stable Diffusion XL comfortably.

For models up to 30B (serious local AI)

The RTX 4060 Ti 16GB or a used RTX 3090 24GB gives you the VRAM headroom for larger models without the 5090 price tag.

For models up to 50B (enthusiast tier)

The RTX 5090 32GB is the new king of consumer AI. It can handle Qwen2.5 72B at Q2 and runs 70B models with partial offloading at usable speeds.

Prices and availability may change. Some links are affiliate links.

Frequently asked questions

How much does the NVIDIA DGX Spark cost?

NVIDIA has not announced an exact price. Industry estimates range from $3,000 to $5,000 based on the Grace Blackwell architecture and 128 GB unified memory.

Can the DGX Spark run 200B+ parameter models?

Yes. With 128 GB unified memory, it can load models up to approximately 200B parameters at Q4 entirely in memory, without CPU offloading.
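The ~200B ceiling can be sanity-checked by running the memory arithmetic in reverse. The sketch assumes about 4.5 bits per parameter for Q4 and reserves part of the pool for the OS, KV cache, and runtime buffers. The reserved amounts (16 GB on the Spark, 4 GB on a discrete card) are illustrative guesses, as is the name `max_params_billion`.

```python
def max_params_billion(
    memory_gb: float,
    reserved_gb: float,
    bits_per_param: float = 4.5,
) -> float:
    """Largest model (in billions of parameters) whose weights fit in memory.

    reserved_gb is memory held back for everything that is not weights;
    both it and bits_per_param are assumptions, not measured values.
    """
    usable_bytes = (memory_gb - reserved_gb) * 1e9
    return usable_bytes * 8 / bits_per_param / 1e9


if __name__ == "__main__":
    print(f"DGX Spark: ~{max_params_billion(128, reserved_gb=16):.0f}B")
    print(f"RTX 5090:  ~{max_params_billion(32, reserved_gb=4):.0f}B")
```

With those assumptions the results land close to the ~200B and ~50B limits quoted in the specification table. A larger reservation for long contexts lowers both numbers.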

DGX Spark vs RTX 5090 — which should I get?

The RTX 5090 has 32 GB of VRAM for ~$2,000. The DGX Spark has 128 GB of unified memory for an estimated $3,000–$5,000. If you run models under 50B, the 5090 is the better value. If you need to run 70B–200B models without offloading, the Spark is the better fit.
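One way to frame the trade-off is price per gigabyte of model-accessible memory. The sketch below uses this article's price figures, which are estimates. It also ignores the rest of the PC that an RTX 5090 needs around it, so treat the output as a rough comparison only.

```python
def usd_per_gb(price_usd: float, memory_gb: float) -> float:
    """Price divided by memory capacity, in USD per GB."""
    return price_usd / memory_gb


# Prices are the estimates quoted in this article, not confirmed list prices.
rtx_5090 = usd_per_gb(2_000, 32)
spark_low = usd_per_gb(3_000, 128)
spark_high = usd_per_gb(5_000, 128)

if __name__ == "__main__":
    print(f"RTX 5090:  ${rtx_5090:.2f}/GB")
    print(f"DGX Spark: ${spark_low:.2f} to ${spark_high:.2f}/GB")
```

Per gigabyte the Spark comes out cheaper even at the high end of the estimate, but that only matters if your models actually need the extra capacity.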

Bottom line

The DGX Spark represents the moment local AI stops being a hobby and becomes a serious workstation category. 128 GB of unified memory running very large models on your desk is not science fiction anymore; it is an NVIDIA product with a shipping date. Whether you wait for it or build an RTX 5090 rig today, the direction is clear: hardware that can run 70B to 200B-class models locally is here. The only question left is which model you want to run first.